Paper Episode 2: Building the Simulation Environment: Flight Dynamics, Sensor Models, and IMM-EKF Fusion


Part 2 of a series on adaptive autonomy switching in human-autonomous teaming.

Why Simulation Fidelity Matters Here

The autonomy governor makes switching decisions based on a single derived quantity: track quality, computed from the position uncertainty of a fused state estimate. Everything upstream of the governor (flight dynamics, sensor models, tracking filters, fusion) exists to produce a realistic state-covariance pair $(\hat{x}, P)$ whose covariance structure reflects genuine epistemic uncertainty rather than a hand-tuned scalar.

This is a non-trivial requirement. A governor calibrated against an overly optimistic covariance will appear adaptive when it is actually operating in a permanently high-confidence regime; one calibrated against an inflated covariance will be pathologically conservative. The simulation must therefore be physically grounded enough that $P$ responds meaningfully to the environmental factors we want to stress-test: sensor quality, threat tempo, and engagement geometry.

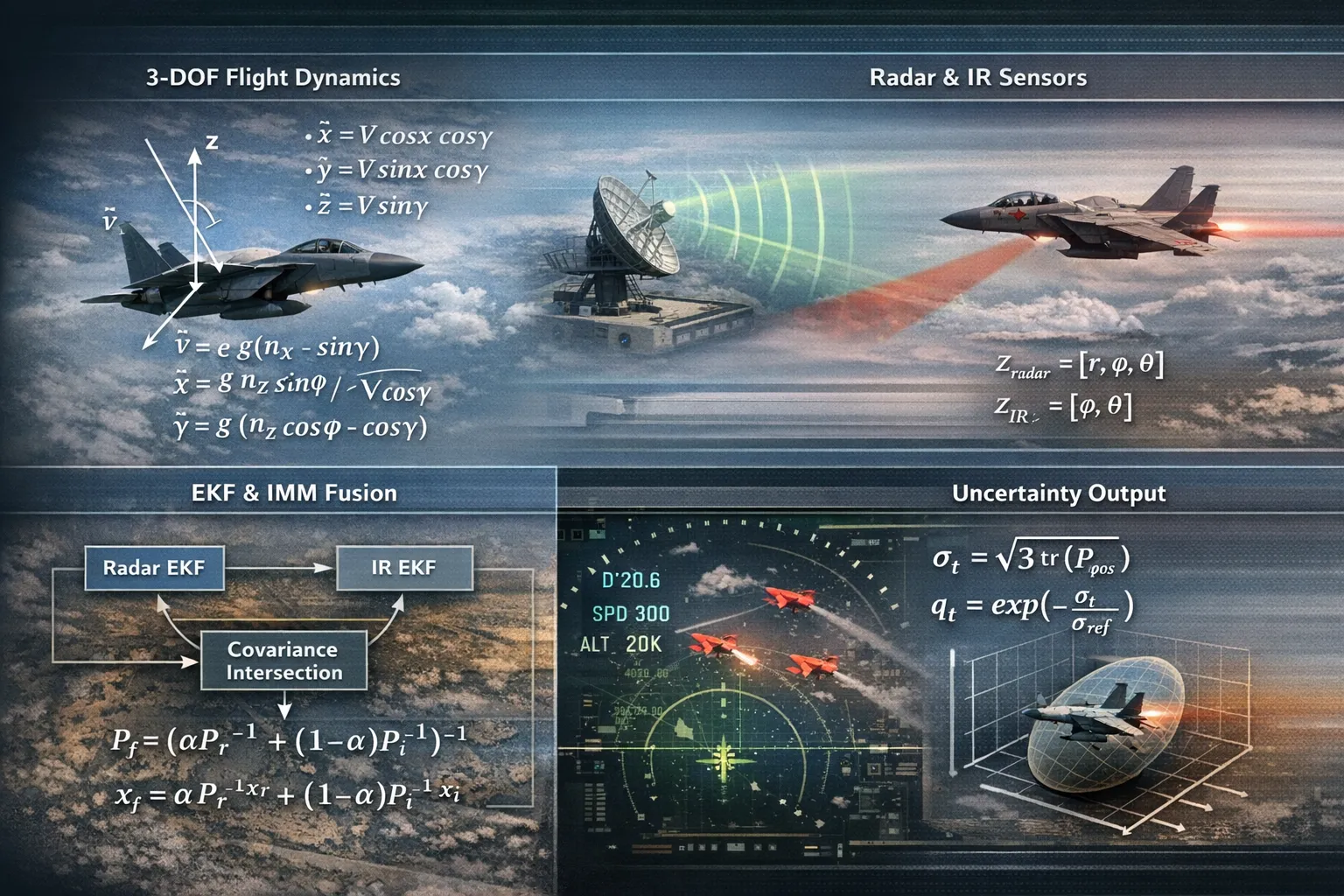

3-DOF Point Mass Dynamics

The flight environment is built on a 3-DOF point mass model, derived from a MATLAB simulation package and ported to Python. The state vector is $\mathbf{x} = [x, y, z, V, \psi, \gamma]^\top$,

where $(x, y, z)$ is position in a local NED frame, $V$ is total airspeed, $\psi$ is heading angle, and $\gamma$ is flight path angle. The equations of motion follow directly from Newton's second law applied to a point mass in coordinated flight.

The control inputs are the axial load factor $n_x$, the normal load factor $n_z$, and the bank angle $\mu$. These are driven by an autopilot layer: separate PID controllers for speed, altitude, and heading track commanded references and produce $(n_x, n_z, \mu)$ at each simulation timestep $\Delta t$.
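The dynamics and control inputs described above can be sketched as follows. This is a minimal sketch of the textbook 3-DOF point mass equations, not the ported MATLAB model: the symbols `n_x`, `n_z`, `mu`, the sign conventions (z positive down, so a climb reduces z), the forward-Euler integrator, and both function names are assumptions made for illustration.

```python
import numpy as np

G = 9.80665  # standard gravity, m/s^2


def point_mass_derivatives(state, n_x, n_z, mu):
    """Time derivative of [x, y, z, V, psi, gamma] in a local NED frame.

    n_x: axial load factor, n_z: normal load factor, mu: bank angle (rad).
    """
    _, _, _, V, psi, gamma = state
    return np.array([
        V * np.cos(gamma) * np.cos(psi),             # north rate
        V * np.cos(gamma) * np.sin(psi),             # east rate
        -V * np.sin(gamma),                          # down rate (climbing lowers z)
        G * (n_x - np.sin(gamma)),                   # airspeed rate
        G * n_z * np.sin(mu) / (V * np.cos(gamma)),  # heading rate
        G * (n_z * np.cos(mu) - np.cos(gamma)) / V,  # flight path angle rate
    ])


def euler_step(state, controls, dt):
    """Advance the state by one forward-Euler step of length dt."""
    state = np.asarray(state, dtype=float)
    return state + dt * point_mass_derivatives(state, *controls)
```

With `n_x = 0`, `n_z = 1`, and `mu = 0` in level flight, every rate except the north velocity vanishes, which is a quick sanity check on the signs.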

Several platform archetypes are modeled, each parameterized by a performance envelope: airspeed and load-factor limits, plus maximum bank and flight path angles. The fighter jet, used as the RED threat in most scenarios, has load-factor and airspeed limits that let its autopilot execute sustained high-$g$ turns. This envelope determines the upper bound on threat maneuverability the tracker must accommodate.

The atmosphere model follows ISA troposphere: density and speed of sound are computed as functions of altitude up to the tropopause, providing altitude-dependent aerodynamic limits for each platform type.
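For reference, the ISA troposphere relations can be sketched in a few lines. The constants are the standard ISA values; the function name and the choice to clamp altitude to the 0 to 11 km band are assumptions of this sketch rather than details of the original package.

```python
import math

T0 = 288.15            # sea-level temperature, K
P0 = 101325.0          # sea-level pressure, Pa
LAPSE = 0.0065         # temperature lapse rate, K/m
R_AIR = 287.053        # specific gas constant for dry air, J/(kg K)
GAMMA_AIR = 1.4        # ratio of specific heats
G0 = 9.80665           # standard gravity, m/s^2
H_TROPOPAUSE = 11000.0 # top of the troposphere, m


def isa_troposphere(h_m):
    """Return (density kg/m^3, speed of sound m/s) at altitude h_m.

    Altitude is clamped to [0, 11 km]; this sketch does not model the stratosphere.
    """
    h = min(max(h_m, 0.0), H_TROPOPAUSE)
    temperature = T0 - LAPSE * h
    pressure = P0 * (temperature / T0) ** (G0 / (LAPSE * R_AIR))
    density = pressure / (R_AIR * temperature)
    speed_of_sound = math.sqrt(GAMMA_AIR * R_AIR * temperature)
    return density, speed_of_sound
```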

Sensor Models

Two sensor modalities are implemented: a tracking radar and a passive IR sensor. Both operate in spherical coordinates relative to the ownship position.

Radar. The radar produces range-azimuth-elevation measurements $z = [r, \theta, \phi]^\top$ with additive noise of standard deviation $\sigma_r$ (in metres) in range and $\sigma_\theta$, $\sigma_\phi$ in angle. These take their nominal values under HIGH quality conditions; degraded (LOW quality) scenarios multiply them by a factor of 4. Detections are gated at a maximum range of 100 km. The radar is a range-complete sensor: it provides direct range information, so its measurements can be converted to Cartesian with bounded position error.

IR sensor. The IR sensor is angle-only: it measures azimuth and elevation, $z = [\theta, \phi]^\top$, with no range component.

The absence of range information creates a fundamental observability constraint: an angle-only sensor cannot initialize a Cartesian track without auxiliary range information. In the pipeline, IR detections are used to update existing radar-initialized tracks; an IR-only detection does not open a new track. This is enforced by the track management logic described below.
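The two measurement models can be sketched as below. The angle conventions (azimuth measured from north toward east, elevation positive above the horizon in an NED frame), the zero-mean Gaussian noise, and all function names are assumptions for illustration; only the 100 km radar gate comes from the text.

```python
import numpy as np


def to_spherical(rel_ned):
    """Convert a relative NED position to [range, azimuth, elevation]."""
    n, e, d = rel_ned
    ground = np.hypot(n, e)
    return np.array([
        np.sqrt(n * n + e * e + d * d),  # slant range
        np.arctan2(e, n),                # azimuth from north, toward east
        np.arctan2(-d, ground),          # elevation, positive above the horizon
    ])


def radar_measurement(target_ned, ownship_ned, sigmas, rng, max_range=100e3):
    """Noisy [r, az, el], or None when the target is beyond the range gate."""
    rel = np.asarray(target_ned, float) - np.asarray(ownship_ned, float)
    truth = to_spherical(rel)
    if truth[0] > max_range:
        return None
    return truth + rng.normal(0.0, sigmas)  # sigmas = [sigma_r, sigma_az, sigma_el]


def ir_measurement(target_ned, ownship_ned, sigmas, rng):
    """Noisy [az, el] only; the IR sensor carries no range information."""
    rel = np.asarray(target_ned, float) - np.asarray(ownship_ned, float)
    return to_spherical(rel)[1:] + rng.normal(0.0, sigmas)  # sigmas = [sigma_az, sigma_el]
```

A LOW quality scenario would pass `4 * sigmas` to the same functions.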

A critical implementation issue emerged here. A naive IR model that assigns a nominal fixed range to each detection produces, at close range, a geometrically impossible position uncertainty that pins track quality and locks the governor at a single level regardless of actual tracking quality. The fix is an angular noise floor that maps angular uncertainty to a range-dependent position uncertainty, ensuring that the effective position uncertainty grows with range, as it physically should. This single correction was essential to prevent governor lockup.
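One way to realize such a floor is the small-angle mapping from angular to cross-range uncertainty. The exact expression used in the pipeline is not recoverable from this post, so the form below, the function name, and the default floor value are all hypothetical.

```python
import numpy as np


def ir_cross_range_sigma(range_m, sigma_ang_rad, sigma_floor_rad=1e-3):
    """Cross-range position sigma (m) implied by an angular sigma at a given range.

    The angular sigma is floored before scaling by range, so the result is
    never smaller than range * floor and always grows linearly with range.
    """
    sigma_eff = np.maximum(sigma_ang_rad, sigma_floor_rad)
    return np.asarray(range_m, dtype=float) * sigma_eff
```

Using the predicted track range here, rather than a fixed nominal range, is what makes the resulting position uncertainty depend on geometry.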

EKF Tracking

Each sensor modality runs an independent EKF tracker. The state model is constant velocity (CV) in Cartesian coordinates, with state $\mathbf{x} = [x, y, z, v_x, v_y, v_z]^\top$.

The process noise follows the discrete Wiener acceleration model,

parameterized by an acceleration spectral density $q$ that is tuned to match the maneuvering bandwidth of the RED platform.
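A sketch of the CV transition and process noise matrices follows. Because the text describes the tuning parameter as a spectral density, the sketch uses the white-noise-acceleration form with $\Delta t^3/3$, $\Delta t^2/2$, and $\Delta t$ blocks; this is an assumption, and the original $Q$ may differ. The function names are illustrative.

```python
import numpy as np


def cv_model(dt, q):
    """Return (F, Q) for a 3-D constant-velocity model.

    State ordering is [x, y, z, vx, vy, vz]; q is the acceleration
    spectral density (m^2/s^3), applied identically to each axis.
    """
    I3 = np.eye(3)
    F = np.block([[I3, dt * I3],
                  [np.zeros((3, 3)), I3]])
    Q = q * np.block([[dt**3 / 3 * I3, dt**2 / 2 * I3],
                      [dt**2 / 2 * I3, dt * I3]])
    return F, Q


def predict(x, P, F, Q):
    """Standard Kalman prediction step."""
    return F @ x, F @ P @ F.T + Q
```

A larger `q` widens the predicted covariance between updates, which is how the filter makes room for a maneuvering target.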

The measurement update linearizes the Cartesian-to-spherical measurement function at the predicted position. For radar, the Jacobian $H$ relates Cartesian state increments to all three measurement components; for IR angle-only updates, $H$ is computed with range held fixed at the predicted track range, and the update is applied only to the angular degrees of freedom.
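The Jacobian for this linearization can be sketched as follows, assuming the position part of the state is expressed relative to the ownship in NED, azimuth is measured from north toward east, and elevation is positive above the horizon. These conventions and the function names are assumptions of the sketch.

```python
import numpy as np


def h_spherical(pos_rel):
    """Measurement function: relative NED position -> [range, azimuth, elevation]."""
    n, e, d = pos_rel
    return np.array([
        np.sqrt(n * n + e * e + d * d),
        np.arctan2(e, n),
        np.arctan2(-d, np.hypot(n, e)),
    ])


def spherical_jacobian(pos_rel):
    """3x6 Jacobian of h_spherical with respect to [x, y, z, vx, vy, vz]."""
    n, e, d = pos_rel
    g2 = n * n + e * e      # squared ground range
    g = np.sqrt(g2)
    r2 = g2 + d * d         # squared slant range
    r = np.sqrt(r2)
    H = np.zeros((3, 6))    # velocity columns stay zero
    H[0, :3] = [n / r, e / r, d / r]
    H[1, :3] = [-e / g2, n / g2, 0.0]
    H[2, :3] = [n * d / (g * r2), e * d / (g * r2), -g / r2]
    return H
```

A radar update would use all three rows; an angle-only IR update would use `spherical_jacobian(p)[1:]`, evaluated at the predicted track position.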

Track management follows a standard M-of-N confirmation / deletion logic: a new tentative track is confirmed after 3 consecutive hits, and an existing track is deleted after 10 consecutive misses. This introduces a track latency that directly affects the governor: the system cannot act on a new threat until the tracker has confirmed it, which takes $3\Delta t$ at minimum. At $\Delta t = 0.05$ s this is 150 ms, fast enough for the engagement timescales considered here, but a genuine design constraint.
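The confirmation and deletion rules reduce to two counters per track. The sketch below is illustrative only: the class and attribute names are hypothetical, and it assumes tentative tracks follow the same 10-miss deletion rule as confirmed ones, which the text does not specify.

```python
from dataclasses import dataclass

CONFIRM_HITS = 3    # consecutive hits needed to confirm a tentative track
DELETE_MISSES = 10  # consecutive misses after which a track is deleted


@dataclass
class TrackStatus:
    hits: int = 0          # current run of consecutive hits
    misses: int = 0        # current run of consecutive misses
    confirmed: bool = False
    deleted: bool = False

    def update(self, detected: bool) -> None:
        """Advance the counters by one scan."""
        if detected:
            self.hits += 1
            self.misses = 0
            if self.hits >= CONFIRM_HITS:
                self.confirmed = True
        else:
            self.misses += 1
            self.hits = 0
            if self.misses >= DELETE_MISSES:
                self.deleted = True
```

With one scan per timestep, the earliest possible confirmation is `CONFIRM_HITS * dt` after the first detection.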

The IMM (Interacting Multiple Model) extension maintains a bank of models (constant velocity and constant turn), with mode probabilities updated at each step via the mixing weights.

The mixed state and covariance are computed as weighted sums over models, providing a smoother covariance estimate during maneuvering phases than a single CV filter.
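The mixing and combination steps of a textbook IMM can be sketched as below. The sketch assumes both models share the same state dimension and that a Markov mode-transition matrix `Pi` is given; the function names and array layout are illustrative, not taken from the original code.

```python
import numpy as np


def imm_mix(mu, Pi, xs, Ps):
    """IMM interaction step.

    mu: (M,) mode probabilities; Pi[i, j]: probability of switching i -> j;
    xs: (M, n) per-model states; Ps: (M, n, n) per-model covariances.
    Returns predicted mode probabilities, mixing weights W[i, j] = mu_{i|j},
    and the mixed state and covariance fed to each model j.
    """
    xs = np.asarray(xs, dtype=float)
    Ps = np.asarray(Ps, dtype=float)
    c = Pi.T @ mu                        # predicted mode probabilities
    W = (Pi * mu[:, None]) / c[None, :]  # each column sums to one
    x_mix = W.T @ xs                     # x_mix[j] = sum_i W[i, j] * xs[i]
    P_mix = np.zeros_like(Ps)
    for j in range(len(mu)):
        for i in range(len(mu)):
            d = xs[i] - x_mix[j]
            P_mix[j] += W[i, j] * (Ps[i] + np.outer(d, d))
    return c, W, x_mix, P_mix


def imm_combine(mu, xs, Ps):
    """Probability-weighted output estimate across models."""
    xs = np.asarray(xs, dtype=float)
    x = mu @ xs
    P = sum(m * (Pj + np.outer(xj - x, xj - x)) for m, xj, Pj in zip(mu, xs, Ps))
    return x, P
```

The spread-of-means term `np.outer(d, d)` is what inflates the combined covariance when the models disagree, which is typically the case during a maneuver.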

Covariance Intersection Fusion

The radar and IR trackers produce separately computed track estimates $(\hat{x}_R, P_R)$ and $(\hat{x}_I, P_I)$ for each platform. These are fused using Covariance Intersection (CI), which is consistent (the fused covariance is guaranteed to be an upper bound on the true covariance) without requiring knowledge of the cross-correlations between the two trackers:

$$P_F^{-1} = \omega P_R^{-1} + (1-\omega) P_I^{-1}, \qquad \hat{x}_F = P_F\left[\omega P_R^{-1}\hat{x}_R + (1-\omega) P_I^{-1}\hat{x}_I\right]$$

The mixing weight $\omega \in [0, 1]$ is nominally chosen to minimize a scalar measure of $P_F$; in the current implementation it is fixed at a constant value as a computationally cheap approximation. The optimal $\omega$ can be found by line search; given the already conservative nature of CI, the approximation error is small relative to the process noise term.
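A minimal sketch of CI fusion with an optional line search is shown below. The function names are illustrative, the fixed weight used in the pipeline is not reproduced here (`omega` is a required argument), and the trace is used as the search criterion purely as an assumption; the determinant is an equally common choice.

```python
import numpy as np


def covariance_intersection(x_a, P_a, x_b, P_b, omega):
    """Fuse two estimates with unknown cross-correlation; omega in [0, 1]."""
    info_a = np.linalg.inv(P_a)
    info_b = np.linalg.inv(P_b)
    P = np.linalg.inv(omega * info_a + (1.0 - omega) * info_b)
    x = P @ (omega * info_a @ x_a + (1.0 - omega) * info_b @ x_b)
    return x, P


def best_omega(P_a, P_b, n_grid=101):
    """Coarse line search for the omega that minimizes trace of the fused covariance."""
    info_a = np.linalg.inv(P_a)
    info_b = np.linalg.inv(P_b)
    grid = np.linspace(0.0, 1.0, n_grid)
    traces = [np.trace(np.linalg.inv(w * info_a + (1.0 - w) * info_b)) for w in grid]
    return float(grid[int(np.argmin(traces))])
```

When one tracker is uniformly more certain than the other, the search collapses to `omega = 1` or `omega = 0`, which amounts to trusting that tracker alone.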

When only one sensor has a confirmed track on a given platform, the single-sensor estimate passes through without fusion. When neither sensor has a track, there is no FusedTrack for that platform, and the governor receives no evidence, which correctly forces track quality toward zero and triggers a downward level transition.

The TrackOutput Interface

The output of the fusion stage is a FusedTrack dataclass:

```python
from dataclasses import dataclass

import numpy as np


@dataclass
class FusedTrack:
    id: int
    t: float
    x: np.ndarray  # (6,): [x, y, z, vx, vy, vz]
    P: np.ndarray  # (6, 6)
    platform_id: int
```

This is the only object the governor sees. The autonomy layer has no access to raw detections, individual sensor states, or platform truth: a deliberate interface boundary that enforces the separation between estimation and decision. The governor operates on this estimate alone, extracting track quality from the position uncertainty encoded in $P$.
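As an illustration of the first step of that extraction, a scalar position uncertainty can be read off the position block of the covariance. The RMS form below and the function name are assumptions; the actual mapping from position uncertainty to track quality is not shown in this post and is not reproduced here.

```python
import numpy as np


def position_sigma(P):
    """Scalar position uncertainty (m): square root of the trace of the 3x3 position block."""
    return float(np.sqrt(np.trace(P[:3, :3])))
```

For a `FusedTrack` instance `trk`, this would be called as `position_sigma(trk.P)`; the velocity block of `P` does not enter.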

The calibration of the parameter in this mapping is the subject of the next post. It turns out to be the single most consequential parameter in the entire system, and getting it wrong in either direction produces a governor that is observationally indistinguishable from a fixed policy.

What This Stack Produces

In a nominal HIGH-quality sensor scenario with a single RED threat at 40 km and closing at 300 m/s, the fused tracker's position uncertainty settles within 5 seconds of first detection. In a LOW-quality scenario (4× noise scaling on both sensors), the same engagement produces a substantially larger position uncertainty, with intermittent track drops when the IR sensor misses due to extended range gating. This spread is large enough to drive meaningful variation in track quality, and therefore in the governor's decisions, across the HIGH/LOW sensor conditions, which is precisely what the experimental design requires.

The multi-threat scenarios (3 simultaneous RED platforms) produce track cross-contamination during close passes when the assignment gating radius is exceeded. This is not a bug; it is a realistic failure mode that stresses the governor in a way that is difficult to reproduce analytically.

Next: Part 3 - The Governor: risk score construction, calibration, and the failure modes that took the longest to diagnose.